NVIDIA recently revealed the Hopper H100 NVL GPU, which is aimed at large language model workloads such as ChatGPT and includes 94 GB of HBM3 memory.

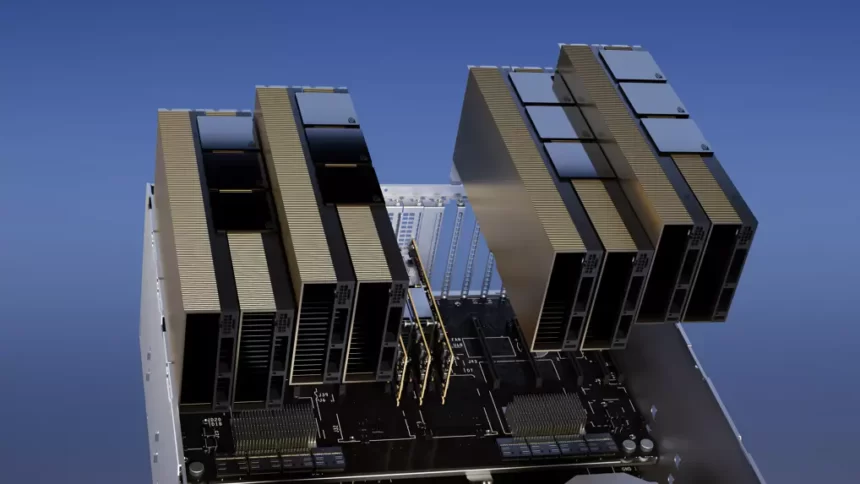

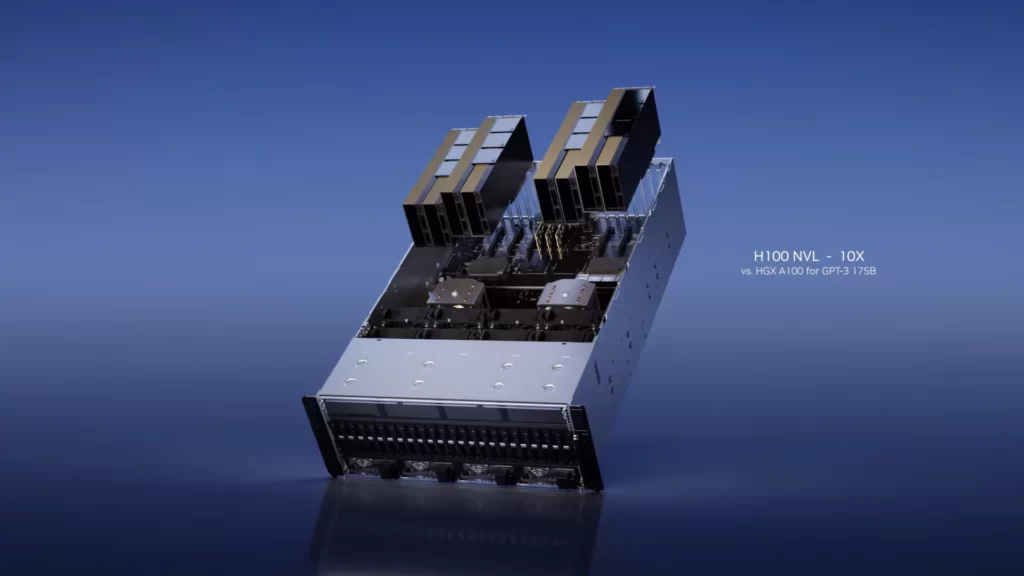

The H100 NVL is a PCIe graphics card powered by the NVIDIA Hopper GPU, and it is reported to pair two GPUs over NVLink, with 94 GB of HBM3 memory on each chip. It is designed to serve models at the scale of ChatGPT, with 175 billion parameters. Compared with a conventional DGX A100 server with up to 8 GPUs, four of these GPUs in a single server may deliver up to 10 times the performance.
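
To put the 94 GB per GPU and the 175 billion parameters in context, here is a rough sketch of how much memory the model weights alone would need at different precisions. The bytes-per-parameter values are illustrative assumptions, not figures from NVIDIA, and the estimate ignores activations, the KV cache, and framework overhead.

```python
# Back-of-the-envelope weight footprint for a 175-billion-parameter model.
# Assumptions: only the weights are counted, and 1 GB is taken as 10**9 bytes.

PARAMS = 175e9        # parameter count quoted in the article
GPU_MEMORY_GB = 94    # per-GPU memory quoted in the article
GPUS_PER_PAIR = 2     # two GPUs linked over NVLink

# Bytes per parameter for common storage precisions (illustrative choice).
BYTES_PER_PARAM = {"FP32": 4, "FP16": 2, "FP8": 1}


def weight_footprint_gb(params: float, bytes_per_param: int) -> float:
    """Return the memory needed to hold the weights, in GB."""
    return params * bytes_per_param / 1e9


def fits_on_pair(params: float, bytes_per_param: int) -> bool:
    """True if the weights alone fit in the combined memory of one GPU pair."""
    total_gb = GPU_MEMORY_GB * GPUS_PER_PAIR
    return weight_footprint_gb(params, bytes_per_param) <= total_gb


for name, size in BYTES_PER_PARAM.items():
    gb = weight_footprint_gb(PARAMS, size)
    print(f"{name}: {gb:.0f} GB, fits on one pair: {fits_on_pair(PARAMS, size)}")
```

Under these assumptions, only the one-byte-per-parameter case (175 GB) fits within the 188 GB of a single pair. Wider formats would need the weights spread across more GPUs.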

Unlike the H100 SXM5 configuration, the H100 PCIe has reduced specifications: 114 of the 144 SMs on the GH100 GPU are enabled, compared with 132 on the H100 SXM. The chip delivers 48 TFLOPs of FP64 compute, along with 3200 TFLOPs of FP8, 1600 TFLOPs of FP16, and 800 TFLOPs of FP32. It also includes 456 Tensor and Texture Units.

The H100 PCIe is expected to run at lower clock speeds, in line with its lower peak compute throughput. Its TDP is 350W, half the SXM5 variant's 700W. The PCIe card still includes 80 GB of memory on a 5120-bit bus interface, but it uses HBM2e (>2 TB/s bandwidth).

The H100 is rated at 48 TFLOPs of non-tensor FP32 compute, whereas AMD's MI210 peaks at 45.3 TFLOPs of FP32. With Tensor and sparsity operations, the H100 can reach up to 800 TFLOPs of FP32. The H100 also has 80 GB of memory, compared with the MI210's 64 GB. NVIDIA appears to be charging a premium for its superior AI/ML capabilities.
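
The comparison above can be turned into simple ratios. The sketch below uses only the figures quoted in this article. The dictionary keys and the `ratio` helper are hypothetical names chosen for illustration, and peak TFLOPs figures are not a measure of real-world performance.

```python
# Ratios between the H100 and MI210 figures quoted in the article.
# Key names are illustrative; the values are the article's peak numbers.

H100 = {"fp32_tflops": 48.0, "fp32_tensor_sparse_tflops": 800.0, "memory_gb": 80}
MI210 = {"fp32_tflops": 45.3, "memory_gb": 64}


def ratio(numerator: float, denominator: float) -> float:
    """Return numerator / denominator as a plain multiplier."""
    return numerator / denominator


# Non-tensor FP32: H100 versus MI210.
fp32_advantage = ratio(H100["fp32_tflops"], MI210["fp32_tflops"])

# Memory capacity: H100 versus MI210.
memory_advantage = ratio(H100["memory_gb"], MI210["memory_gb"])

# H100 only: Tensor plus sparsity FP32 versus its own non-tensor FP32 rate.
tensor_speedup = ratio(H100["fp32_tensor_sparse_tflops"], H100["fp32_tflops"])

print(f"Non-tensor FP32 advantage: {fp32_advantage:.2f}x")
print(f"Memory capacity advantage: {memory_advantage:.2f}x")
print(f"Tensor + sparsity vs non-tensor FP32 on H100: {tensor_speedup:.1f}x")
```

On these numbers the non-tensor FP32 gap is only a few percent. The larger differences are in memory capacity and in the Tensor-accelerated paths.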